»A Little History

Puppet has been around for 13 years and has gathered a substantial following for a range of configuration management use cases, such as configuring MongoDB clusters or automating failovers while using Nginx as a load balancer. It pioneered the concept of treating server configuration as code and deploying it across a large estate.

»Loose Lips Sink Ships

However, one of the main risks with using Puppet is the fear of secret sprawl. Unsurprisingly, when you start managing your servers like applications, you start to manage things like SSH keys, PKI certificates, passwords and API tokens. The thought of accidentally leaking these secrets across distributed clouds, systems, and applications creates a low trust network with serious security risks.

So, a number of people came up with solutions for keeping secrets in Puppet code, the most popular being hiera-eyaml. But onboarding new people and managing the keys for eyaml can be a pain, and there is no easy way to audit which servers are using which secrets.

If only there was a better way...

»Enter HashiCorp Vault

Vault is a great solution for centrally managing secrets in Puppet. It moves the secrets problem, along with the difficulties of encrypting data in transit and at rest, away from the operator and into a dedicated service. It also gives you the benefits of running a dedicated secrets service, such as auditability, fine-grained ACLs, and the ability to use a GUI for configuring and rotating secrets.

»Using Hiera as a Backend

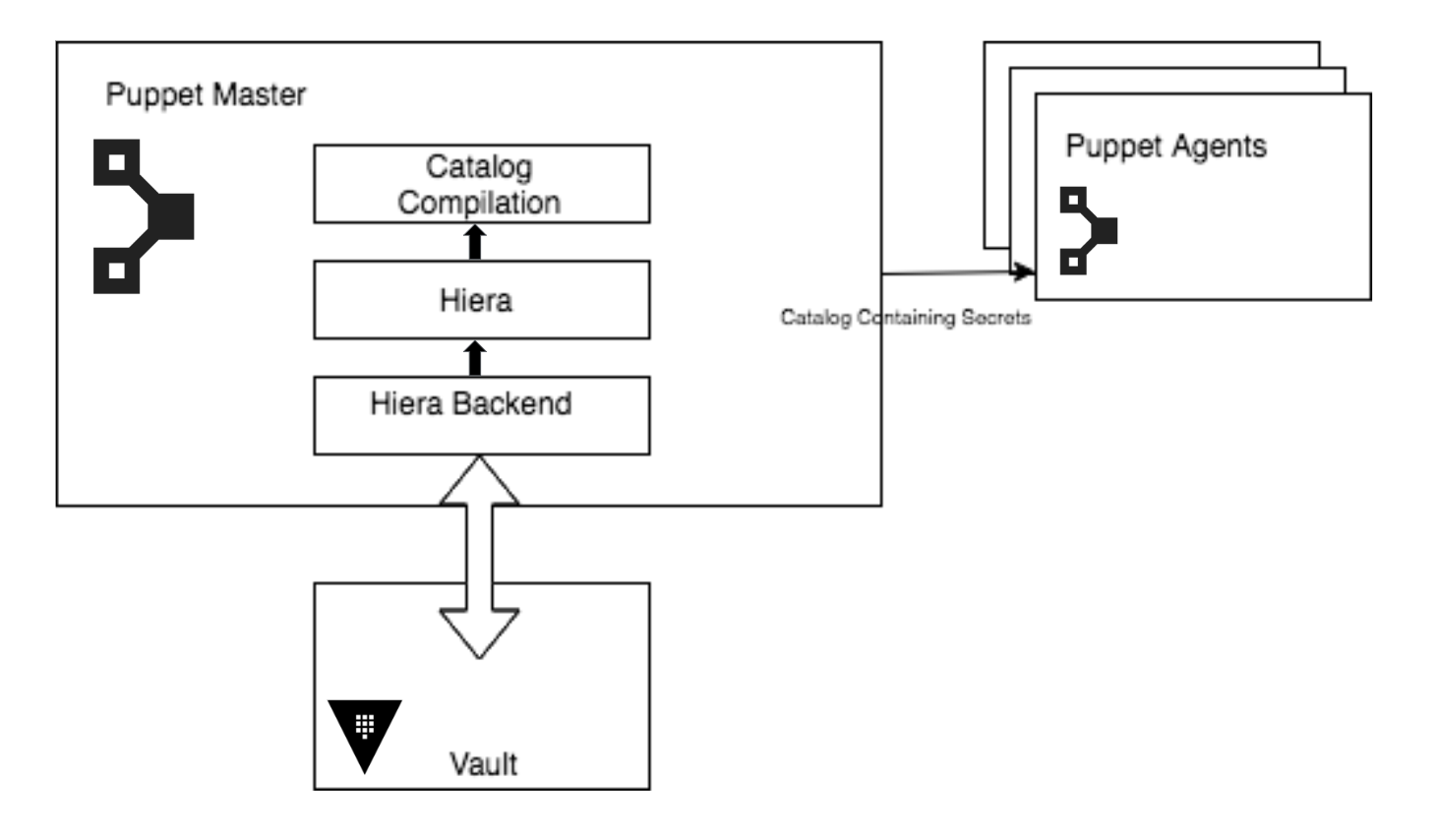

In the demo from the webinar, we showed a basic example of using Vault as a backend for secret storage via Puppet's Hiera lookup tool. This means that secrets are fetched at catalog compile time, via a connection to Vault, and then the information is fed into Hiera in the normal workflows you would use with Puppet.

It's not the only way of using Vault with Puppet, but it's an efficient way of integrating using existing Puppet infrastructure.

»Demo Environment

For those who were unable to attend the live webinar recording, we explain it briefly here.

The architecture looks roughly like the following:

There's a little setup involved: the Vault gem requires a newer version of Ruby (> 2.0.0), while older Puppetserver releases ship with a JRuby that provides Ruby 1.9.1, which will cause some issues.

This can be fixed by configuring Puppetserver to use the new JRuby 9K jar, which provides a newer version of Ruby. If you're using Puppet Enterprise, this can be configured by setting the Hiera key for the JRuby setting like so:

puppet_enterprise::master::puppetserver::jruby_jar: "/opt/puppetlabs/server/apps/puppetserver/jruby-9k.jar"

Puppet Enterprise 2018.1 sets JRuby to 9k by default, and the Puppetserver 6.0.0+ releases will remove the older JRuby entirely and switch to JRuby 9k.

The code used to bootstrap this process is available on GitHub (pull requests welcome!).

When an agent requests a catalog, the Vault backend handles any lookup whose key matches the key restrictions (i.e. it contains Vault or has the word password inside it). The backend reaches out to Vault and checks whether a secret exists in the secret backend under the system's name. If so, it returns that value, which is then used by the Puppet resource.
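To make that flow concrete, here is a minimal Ruby sketch of the decision the backend makes for each lookup. It is not the hiera-vault implementation: a plain Hash stands in for Vault, and the names `FAKE_VAULT`, `CONFINE_TO_KEYS` and `lookup_secret`, the `secret` mount and the path layout are all assumptions made for illustration.

```ruby
# Illustrative only: a Hash stands in for Vault, and every name here is hypothetical.
FAKE_VAULT = {
  "secret/prod-db-1.example.com/db_password" => "s3cr3t"
}.freeze

# Patterns that decide which Hiera keys are worth asking Vault about.
CONFINE_TO_KEYS = [/vault/, /password/].freeze

# Returns the secret for `key` scoped to `certname`, or nil so that
# Hiera can carry on to the next level of the hierarchy.
def lookup_secret(key, certname, store: FAKE_VAULT, mount: "secret")
  # Skip the store entirely for keys that do not match any pattern.
  return nil unless CONFINE_TO_KEYS.any? { |pattern| pattern.match?(key) }

  # Secrets are looked up under a path that includes the node's name.
  store["#{mount}/#{certname}/#{key}"]
end
```

With this sketch, `lookup_secret("db_password", "prod-db-1.example.com")` finds the stored value, while a key such as `ntp_servers` never reaches the store, and the same key requested for a different node name returns nothing.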

»Questions from the Webinar

The live demo had too many questions for us to answer in the time provided so we're following up with the answers below.

Questions:

- How do you protect data from handmade manifests from unprivileged users?

The scope of the data that someone can get from Vault is determined by the lookup hierarchy. In the demo, we locked this down to the trusted certname of the machine in question. Trusted facts are stamped on an agent at the Puppet certificate signing stage, meaning that a node can only access secrets scoped to its own certname (usually the fully qualified domain name of the machine). The certname is also unique. So, for example, if you have a production database with the FQDN prod-db-1.example.com and someone tried to create a spoof machine to get the production database credentials, the original node would first have to have its certificate revoked and purged; the new machine would then have to create a signing request and have it approved. In this case the secure introduction happens at the Puppet CA level, so make sure the CA is properly secured. This can be done with policy-based autosigning.

Also, if you wrap any secrets in the Sensitive data type, the information will be hidden from Puppet reports, so the only way to get direct access to the secret would be to have access to the machine and the requisite permissions to the files on disk. At that point, your data is exposed regardless of what Vault or Puppet do!

- Does this solution work on a Windows server?

It depends which part is Windows!

Puppetserver is a Unix-only application.

The Puppet agent can run and is fully supported on modern Windows nodes. The process is the same, so the Hiera lookup with Vault can be used to fetch secrets to utilize with a Windows agent, such as the credentials for an IIS server.

Vault itself can be run on a Windows machine, but we recommend using a Linux environment to run the Vault service, as that's what the majority of the community is doing, as well as what we use internally at HashiCorp.

- Is there a way to avoid putting a Vault token in plain text in hiera.yaml? Isn't it a potentially big security risk to have the token in clear text in hiera.yaml? What are the ways to mitigate it?

This is a very valid question. This can be set via an environment variable rather than by a plain text token in hiera.yaml:

Address: The address of the Vault server, also read as ENV["VAULT_ADDR"]

Token: The token to authenticate with Vault, also read as ENV["VAULT_TOKEN"]

So, you can set the token using an environment variable in the Puppetserver startup config:

- For RHEL/CentOS, /etc/sysconfig/puppetserver.

- For Debian/Ubuntu, /etc/default/puppetserver.

We will add an example in the GitHub repo of doing this via an environment variable.
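In the meantime, the fallback behaviour can be sketched in a few lines of Ruby. This is only an illustration of the idea: the helper name `vault_settings`, the option keys, and the choice to prefer explicit options over the environment are assumptions, not the backend's actual code.

```ruby
# Hypothetical helper: resolves Vault connection settings, preferring
# values from the backend options and falling back to the environment.
def vault_settings(options = {}, env = ENV)
  address = options["address"] || env["VAULT_ADDR"]
  token   = options["token"]   || env["VAULT_TOKEN"]

  # Fail early with a clear message rather than sending a blank token.
  raise ArgumentError, "no Vault address configured" if address.nil?
  raise ArgumentError, "no Vault token configured" if token.nil?

  { address: address, token: token }
end
```

Passing the environment in as an argument keeps the helper easy to exercise without touching the real process environment. With no options set in hiera.yaml, both values come from the variables exported in the Puppetserver startup config.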

- Do you have any metrics or info on performance impact (for the Hiera-based approach), especially where you have many classes with many params?

We don't have any specific metrics on performance impact, but it is one of the reasons the confine_to_keys setting is available, to specify a regular expression to limit Vault lookups, which should prevent a lot of the performance impact of the Vault lookups.

If anyone has any metrics or solid numbers around this, feel free to reach out!

- Is there any support for other kinds of Vault secret backends other than generic key/value? In particular I'm interested in the PKI backend.

Right now, davealden/hiera-vault only supports the k/v backend, but it would be possible to write Puppet functions that interact with the other Vault backends, such as the PKI backend. These would run on the Puppetserver at compile time and could be used to create certificates on disk.

If anyone has plans to do this, reach out to me. We'd love to help!

- Can you talk about auditing secrets and version control on change?

This was discussed in Nice and Secure: Good OpSec Hygiene With Puppet! at PuppetConf 2016. You can audit your existing code repo with tools like Trufflehog, Gittyleaks or just shell regexes. You could also ensure your pull-request process has human reviewers, or use CI/CD jobs or pre-commit hooks to try to detect leaked credentials.
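As a rough illustration of the regex approach, here is a small Ruby sketch that could sit behind a pre-commit hook or CI job. The three patterns and the `scan_for_secrets` name are assumptions chosen for the example; dedicated tools ship far more thorough rule sets.

```ruby
# Hypothetical patterns; real tools ship far more thorough rule sets.
SECRET_PATTERNS = {
  "AWS access key ID"   => /\bAKIA[0-9A-Z]{16}\b/,
  "private key block"   => /-----BEGIN [A-Z ]*PRIVATE KEY-----/,
  "hard-coded password" => /password\s*(=>|=|:)\s*['"][^'"]+['"]/i
}.freeze

# Returns [line_number, label] pairs for every suspicious line in `text`.
def scan_for_secrets(text)
  text.each_line.with_index(1).flat_map do |line, number|
    SECRET_PATTERNS.select { |_label, pattern| pattern.match?(line) }
                   .keys
                   .map { |label| [number, label] }
  end
end
```

A hook could run this over each staged file and refuse the commit when the result is not empty. Expect false positives and misses, so treat it as a complement to human review rather than a replacement.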

- Are there any advantages to using Jerakia to talk to Vault?

We've interacted extensively with Craig Dunn, a well-known member of the Puppet open-source community, on this topic; he also wrote a great blog post, Managing Puppet Secrets with Jerakia and Vault. We recommend reaching out to him for more information.

- Is it possible to lock down the token used in hiera so only the puppet master can use it?

In terms of the token, this can be achieved with Vault Sentinel, which is a Vault Enterprise only feature. With a Role Governing Policy (RGP), you could restrict use of the Puppet namespace to a certain IP address range. Some examples are documented here.

On a system level, you could restrict access to Vault with standard tools like firewalls.

- What's Consul and why is it recommended for production Vault deployments?

"Consul is a highly available and distributed service discovery and KV store designed with support for the modern data center to make distributed systems and configuration easy." - Consul

Consul is the recommended backend for any production Vault deployment because of its distributed nature and HA features. In addition to providing durable storage, the Consul backend will also register Vault as a service in Consul with a default health check. Also, unlike some Vault backends, the Consul backend is officially supported by HashiCorp.

Read here for more on using Consul as a backend.

- If we're running on AWS, can we use the AWS auth on the Puppetserver so that we won't have to put the token in yaml or as an environment variable?

As the backend stands now, no. It assumes a given AppRole or specific token. But it could be added as a new feature.

- A usual question is when using Consul as a Vault backend then there is a chicken and egg issue on how you keep Consul secrets when Vault depends on it. Any sharable experience on that combination of Puppet, Consul and Vault?

The information Vault keeps in Consul is fully encrypted before it is written. Consul treats it like any other data, and because it is never stored as plaintext, a leak from Consul does not expose the secrets themselves.

- If I have a suite of applications, instead of integrating vault directly in every app, how about creating a single microservice which only does the secret management and interacts with Vault for any service on my cloud?

This is a perfectly valid option, and a few people have done this. BanzaiCloud wrote a tool specifically for Vault interactions in Kubernetes: Banzaicloud's GitHub

- Seems like David Alden's hiera_vault Puppet module restricts us to only looking up a secret with the key of "value." Is there a way to look up other key/value data in the same secret without having to change the default_field option in the hiera.yaml?

Currently no, but this could be implemented as a feature, perhaps modelled on Hiera's own hierarchy, where the backend works through an ordered list of fields it should look up.

You can also configure the backend to read the entire JSON, then use Puppet functions to read the JSON as a hash and pull out the field you are interested in. You could do this by setting default_field_parse to json, which returns the entire JSON blob of a secret rather than a single field.
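The "parse the blob, then pick a field" step looks like this in plain Ruby. It is a sketch of the idea only: the `secret_field` helper and the sample payload are assumptions, and inside a manifest you would use Puppet functions rather than raw Ruby.

```ruby
require "json"

# Hypothetical helper: parses a secret returned as a JSON string and
# pulls out one field. Raises KeyError if the field is missing, so a
# typo in the field name fails loudly instead of yielding nil.
def secret_field(raw_json, field)
  JSON.parse(raw_json).fetch(field)
end

# Example payload, as it might look when the whole secret is returned.
raw_secret = '{"username": "app", "password": "s3cr3t", "port": 5432}'

username = secret_field(raw_secret, "username")
port     = secret_field(raw_secret, "port")
```

One lookup can then supply several related values, such as a username, password and port, instead of one Vault secret per field.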

This option is currently not documented, so I'm reaching out to David to add some README additions.

- Having installed the Vault UI, I am not able to issue a new token. Is this yet not implemented or am I doing something wrong?

Currently you cannot create tokens from the Vault UI. If this is a feature you're interested in, please open an issue in the Vault GitHub repo.

- If I save the token in a file on disk, when will Puppetserver read it? Each time, for each host, when I run "puppet agent -t", or will it cache it in memory?

The token will be read at Puppetserver startup and will be preserved until the service is stopped or restarted.

- Are there any security concerns to using the Filesystem backend, or are they just availability concerns?

There are no security concerns: the secrets are kept on the filesystem as ciphertext. The trade-off is purely the loss of the distributed features you get with Consul, plus the risk of data loss if the filesystem fails and there's no backup.