Inject secrets into Terraform using the Vault provider

Traditionally, developers looking to safely provision infrastructure using Terraform are given their own set of long-lived, scoped AWS credentials. While this gives developers freedom, long-lived credentials can be dangerous and difficult to secure.

Operators need to manage a large number of static, long-lived AWS IAM credentials with varying scope.

Long-lived credentials on a developer's local machine create a large attack surface. If a malicious actor gains access to the credentials, they could use them to damage resources.

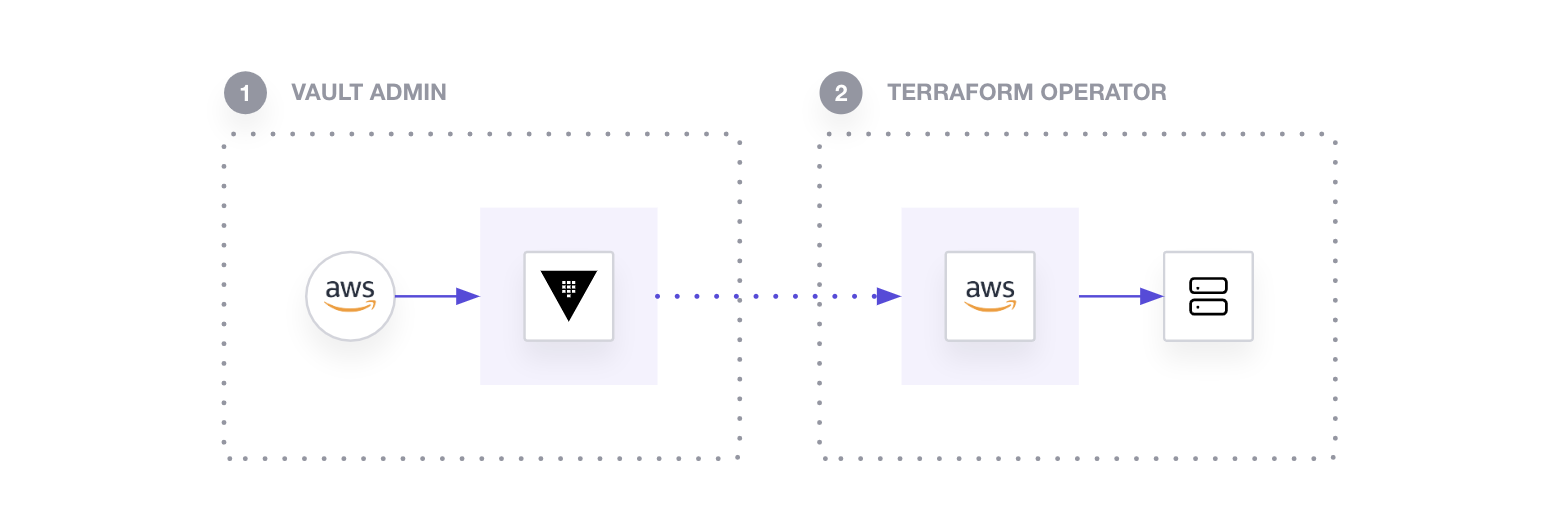

You can address both concerns by storing your long-lived AWS credentials in HashiCorp Vault's AWS Secrets Engine, then using Terraform's Vault provider to generate appropriately scoped, short-lived AWS credentials that Terraform uses to provision resources in AWS.

As a result, operators (Vault Admin) are able to avoid managing static, long-lived secrets with varying scope and developers (Terraform Operator) are able to provision resources without having direct access to the secrets.

In this tutorial, you assume the role of both the Vault Admin and the Terraform Operator.

First, as a Vault Admin, you will configure AWS Secrets Engine in Vault.

Then, as a Terraform Operator, you will connect to the Vault instance to retrieve dynamic, short-lived AWS credentials generated by the AWS Secrets Engine to provision an Ubuntu EC2 instance.

Finally, as a Vault Admin, you will remove the Terraform Operator's ability to manipulate EC2 instances by modifying the policy for the corresponding Vault role.

Throughout this journey, you'll learn about the benefits and considerations this approach has to offer.

Warning

If you're not using an account that qualifies under the AWS free tier, you may be charged to run these examples. You should be charged no more than a few dollars, but we're not responsible for any charges you may incur.

Prerequisites

In order to follow this tutorial, you should be familiar with the usual Terraform plan/apply workflow and Vault. If you're new to Terraform, refer first to the Terraform Getting Started tutorial. If you're new to Vault, refer first to the Vault Getting Started tutorial.

In addition, you will need the following:

- Terraform installed locally

- Vault installed locally

- an AWS account and AWS Access Credentials

If you don't have AWS Access Credentials, create your AWS Access Key ID and Secret Access Key by navigating to your service credentials in the IAM service on AWS. Click "Create access key" to view your AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY. You will need these values later.

Start Vault server

Start a Vault server in development mode with education as the root token. Leave this process running in your terminal window.

Your Vault server should now be up. Navigate to localhost:8200 and log in to the instance using your root token: education.

Clone repository

In your terminal, clone the Inject Secrets repository and navigate into the directory. It contains the example configurations used in this tutorial.

This directory should contain two Terraform workspaces: a vault-admin-workspace and an operator-workspace.

Configure AWS Secrets Engine in Vault

In another terminal window (leave the Vault instance running), navigate to the Vault Admin directory.

In the main.tf file, you will find 2 resources:

- the vault_aws_secret_backend.aws resource configures AWS Secrets Engine to generate a dynamic token that lasts for 2 minutes.

- the vault_aws_secret_backend_role.admin resource configures a role for the AWS Secrets Engine named dynamic-aws-creds-vault-admin-role with an IAM policy that allows it iam:* and ec2:* permissions.

This role will be used by the Terraform Operator workspace to dynamically generate AWS credentials scoped to this IAM policy.
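As a rough sketch, the two resources described above might look something like the following. The variable names (aws_access_key, aws_secret_key, name) and the mount path are assumptions made for illustration; the main.tf in the repository is the authoritative version.

```hcl
# Assumed input variables; the real names are defined in the repository's main.tf.
variable "aws_access_key" {
  type = string
}

variable "aws_secret_key" {
  type = string
}

variable "name" {
  type    = string
  default = "dynamic-aws-creds-vault-admin"
}

# Mounts the AWS Secrets Engine and stores the long-lived credentials in Vault.
resource "vault_aws_secret_backend" "aws" {
  access_key = var.aws_access_key
  secret_key = var.aws_secret_key
  path       = "${var.name}-path" # assumed mount path

  # Credentials generated through this mount expire after 2 minutes.
  default_lease_ttl_seconds = 120
}

# Role that Vault uses to create scoped IAM users on demand.
resource "vault_aws_secret_backend_role" "admin" {
  backend         = vault_aws_secret_backend.aws.path
  name            = "${var.name}-role" # dynamic-aws-creds-vault-admin-role
  credential_type = "iam_user"

  policy_document = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Effect   = "Allow"
        Action   = ["iam:*", "ec2:*"]
        Resource = "*"
      }
    ]
  })
}
```

Writing the IAM policy with jsonencode rather than a heredoc is a stylistic choice in this sketch; either form produces the same policy document.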

Before applying this configuration, set the required Terraform variables, substituting <AWS_ACCESS_KEY_ID> and <AWS_SECRET_ACCESS_KEY> with your AWS credentials. Notice that we're also setting the required Vault provider arguments as environment variables: VAULT_ADDR and VAULT_TOKEN.
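To illustrate why environment variables are enough here, the provider block in a configuration like this one can be left empty. This is a minimal sketch, not necessarily the exact block in the repository.

```hcl
# No address or token is hard-coded here. When these arguments are omitted,
# the Vault provider falls back to the VAULT_ADDR and VAULT_TOKEN
# environment variables.
provider "vault" {}

# Terraform input variables can be supplied the same way: an environment
# variable named TF_VAR_<variable name> sets the variable with that name,
# which keeps the AWS credentials out of the configuration files.
```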

Initialize the Vault Admin workspace.

In your initialized directory, run terraform apply, review the planned actions, and confirm the run with a yes.

Notice that there are two output variables named backend and role. These output variables will be used by the Terraform Operator workspace in a later step.
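The two outputs could be declared along these lines. Which attributes they expose (the mount path and the role name) is an assumption based on what the Terraform Operator workspace needs in order to request credentials.

```hcl
# Mount path of the AWS Secrets Engine (assumed attribute).
output "backend" {
  value = vault_aws_secret_backend.aws.path
}

# Name of the Vault role used to generate credentials (assumed attribute).
output "role" {
  value = vault_aws_secret_backend_role.admin.name
}
```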

If you go to the terminal where your Vault server is running, you should see Vault output something similar to the below. This means Terraform was successfully able to mount the AWS Secrets Engine at the specified path. The role has also been configured although it's not output in the logs.

Provision compute instance

Now that you have successfully configured Vault's AWS Secrets Engine, you can retrieve dynamic, short-lived AWS credentials to provision an EC2 instance.

Navigate to the Terraform Operator workspace.

In the main.tf file, you should find the following data and resource blocks:

- the terraform_remote_state.admin data block retrieves the Terraform state file generated from your Vault Admin workspace

- the vault_aws_access_credentials.creds data block retrieves the dynamic, short-lived AWS credentials from your Vault instance. Notice that this uses the Vault Admin workspace's output variables: backend and role

- the aws provider is initialized with the short-lived credentials retrieved by vault_aws_access_credentials.creds. The provider is configured to the us-east-1 region, as defined by the region variable

- the aws_ami.ubuntu data block retrieves the most recent Ubuntu image

- the aws_instance.main resource block creates a t2.micro EC2 instance
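The blocks listed above fit together roughly as follows. This is a sketch under several assumptions: that the Vault Admin workspace keeps its state in a local file at the path shown, that the AMI name pattern matches the image you want, and that the instance carries a Name tag. Compare it with the repository's main.tf before relying on it.

```hcl
variable "region" {
  type    = string
  default = "us-east-1"
}

# Assumed relative path to the Vault Admin workspace's local state file.
variable "path" {
  type    = string
  default = "../vault-admin-workspace/terraform.tfstate"
}

# Reads the backend and role outputs from the Vault Admin workspace.
data "terraform_remote_state" "admin" {
  backend = "local"

  config = {
    path = var.path
  }
}

# Asks Vault to generate short-lived AWS credentials for the role.
data "vault_aws_access_credentials" "creds" {
  backend = data.terraform_remote_state.admin.outputs.backend
  role    = data.terraform_remote_state.admin.outputs.role
}

# The AWS provider authenticates with the credentials Vault just issued.
provider "aws" {
  region     = var.region
  access_key = data.vault_aws_access_credentials.creds.access_key
  secret_key = data.vault_aws_access_credentials.creds.secret_key
}

data "aws_ami" "ubuntu" {
  most_recent = true
  owners      = ["099720109477"] # Canonical's AWS account ID

  filter {
    name   = "name"
    values = ["ubuntu/images/hvm-ssd/ubuntu-*-amd64-server-*"] # assumed name pattern
  }
}

resource "aws_instance" "main" {
  ami           = data.aws_ami.ubuntu.id
  instance_type = "t2.micro"

  tags = {
    Name = "dynamic-aws-creds-operator"
  }
}
```

Note that no AWS secret appears anywhere in this workspace; the only credential the Terraform Operator needs is a way to authenticate to Vault.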

Tip

We recommend using provider-specific data sources when convenient. terraform_remote_state is more flexible, but requires access to the whole Terraform state.

Initialize the Terraform Operator workspace.

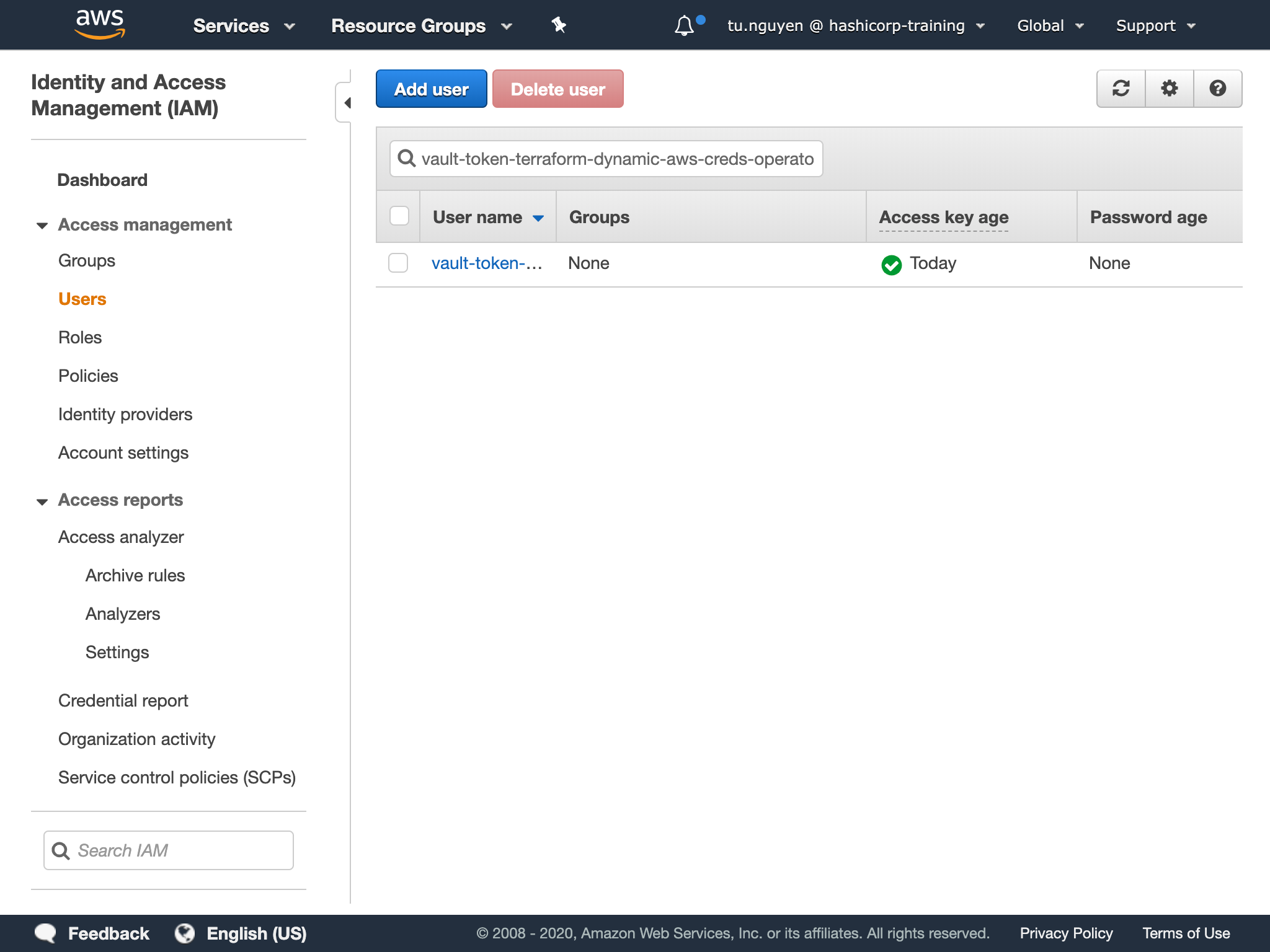

Navigate to the IAM Users page in AWS Console. Search for the username prefix vault-token-terraform-dynamic-aws-creds-vault-admin. Nothing should show up on your initial search. However, a user with this prefix should appear on terraform plan or terraform apply.

Apply the Terraform configuration. Remember to confirm the run with a yes. Terraform will provision the EC2 instance using the dynamic credentials generated from Vault.

Refresh the IAM Users page and search for the vault-token-terraform-dynamic-aws-creds-vault-admin prefix. You should see an IAM user.

This IAM user was generated by Vault with the appropriate IAM policy configured by the Vault Admin workspace. Because the default_lease_ttl_seconds is set to 120 seconds, Vault will revoke those IAM credentials and they will be removed from the AWS IAM console after 120 seconds.

Tip

The token is generated from the moment the configuration retrieves the temporary AWS credentials (on terraform plan or terraform apply). If the apply run is confirmed after the 120 seconds, the run will fail because the credentials used to initialize the Terraform AWS provider have expired. For these instances or large multi-resource configurations, you may need to adjust the default_lease_ttl_seconds.
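For example, the lease could be lengthened in the Vault Admin workspace with a change along these lines. The values are purely illustrative, and the mount path and variable names are assumptions; pick the shortest TTL that still covers your slowest run.

```hcl
resource "vault_aws_secret_backend" "aws" {
  access_key = var.aws_access_key # assumed variable name, declared elsewhere
  secret_key = var.aws_secret_key # assumed variable name, declared elsewhere
  path       = "dynamic-aws-creds-vault-admin-path" # assumed mount path

  # Illustrative values: 10 minutes by default, 20 minutes at most.
  default_lease_ttl_seconds = 600
  max_lease_ttl_seconds     = 1200
}
```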

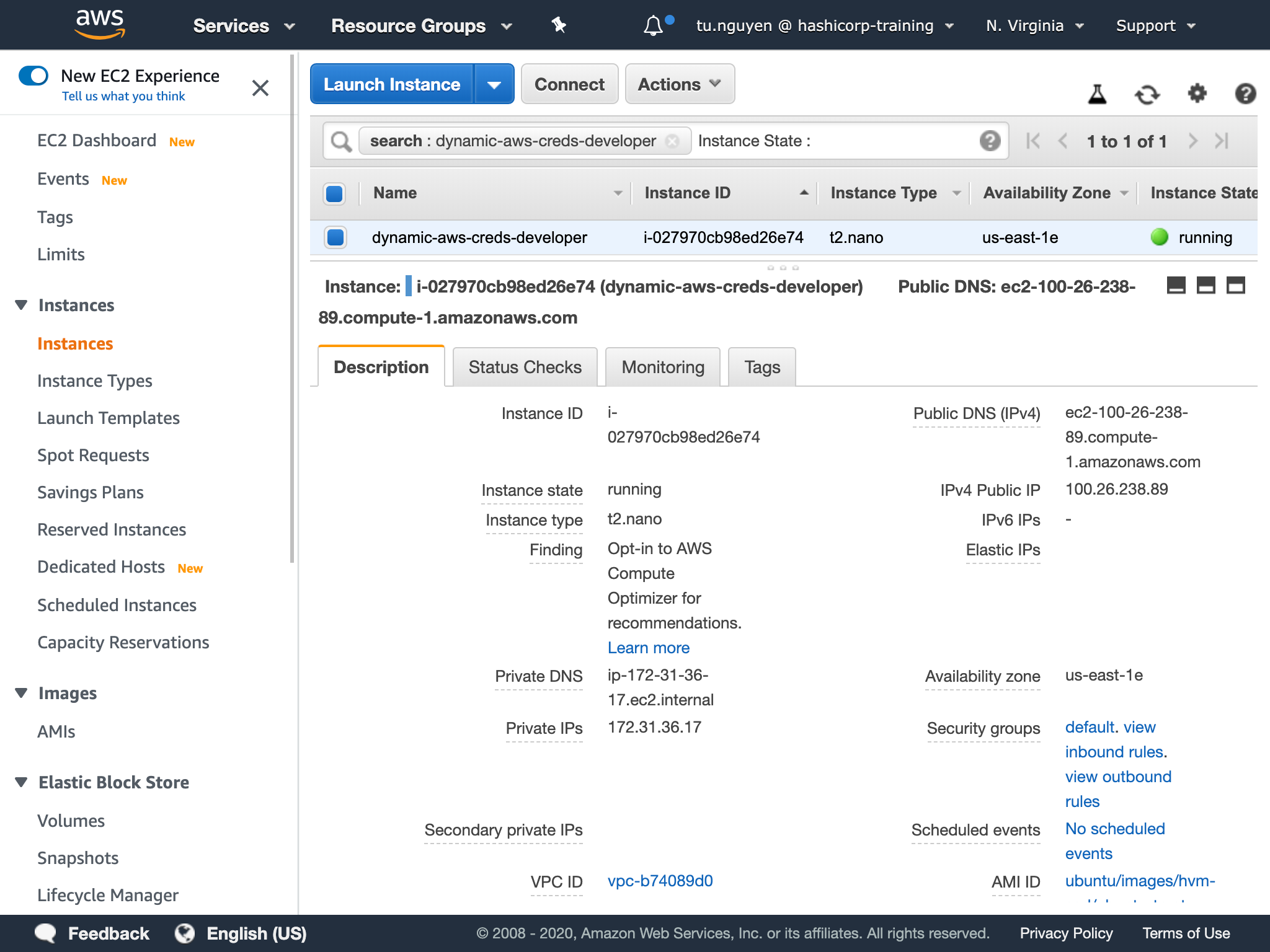

Navigate to the EC2 page and search for dynamic-aws-creds-operator. You should see an instance provisioned by the Terraform Operator workspace using the short-lived AWS credentials.

Every Terraform run with this configuration will use its own unique set of AWS IAM credentials that are scoped to whatever the Vault Admin has defined.

The Terraform Operator doesn't have to manage long-lived AWS credentials locally. The Vault Admin only has to manage the Vault role rather than numerous, multi-scoped, long-lived AWS credentials.

After 120 seconds, you should see the following in the terminal running Vault.

This shows that Vault has destroyed the short-lived AWS credentials generated for the apply run.

Destroy EC2 instance

Destroy the EC2 instance. Remember to confirm the run with a yes.

This run should have generated and used another set of IAM credentials. Verify that your EC2 instance has been destroyed by viewing the EC2 page of your AWS Console.

Restrict Vault role's permissions

If the Vault Admin wanted to remove the Terraform Operator's EC2 permissions, they would only need to update the Vault role's policy.

Navigate to the Vault Admin workspace.

Remove "ec2:*" from the vault_aws_secret_backend_role.admin resource in your main.tf file.

This change restricts the Terraform Operator's ability to provision any AWS EC2 instance.
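After the edit, the role might look like the sketch below. Only the Action list differs from the original role; the backend reference, the role name, and the use of jsonencode are assumptions about how the repository's main.tf is written.

```hcl
resource "vault_aws_secret_backend_role" "admin" {
  backend         = vault_aws_secret_backend.aws.path
  name            = "dynamic-aws-creds-vault-admin-role"
  credential_type = "iam_user"

  policy_document = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Effect   = "Allow"
        Action   = ["iam:*"] # "ec2:*" removed
        Resource = "*"
      }
    ]
  })
}
```

Because credentials are generated per run, the narrower policy applies to the next set of credentials Vault issues; nothing has to be rotated or redistributed to the Terraform Operator.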

Apply the Terraform configuration. Remember to confirm the run with a yes.

Verify restricted Terraform Operator permissions

Navigate to the Terraform Operator workspace.

Run terraform plan. This plan should fail because the Terraform Operator no longer has CRUD permissions on EC2 instances due to changes to the dynamic-aws-creds-vault-admin role.

Benefits and considerations

This approach to secret injection:

- alleviates the Vault Admin's responsibility in managing numerous, multi-scoped, long-lived AWS credentials,

- reduces the risk from a compromised AWS credential in a Terraform run (if a malicious user gains access to an AWS credential used in a Terraform run, that credential is only valid for the length of the token's TTL),

- allows for management of a role's permissions through a Vault role rather than the distribution/management of static AWS credentials,

- enables developers to provision resources without managing local, static AWS credentials.

However, this approach may run into issues when applied to large multi-resource configurations. The generated dynamic AWS Credentials are only valid for the length of the token's TTL. As a result, if:

- the apply process exceeds the TTL and the configuration needs to provision another resource, or

- the apply confirmation time exceeds the TTL,

the apply process will fail because the short-lived AWS Credentials have expired.

You could increase the TTL to conform to your situation; however, this also lengthens the period during which the temporary AWS credentials are valid, giving a malicious actor more time to misuse them.

Summary

Congratulations! You have successfully:

- configured Vault's AWS Secrets Engine through Terraform,

- used dynamic short-lived AWS credentials to provision infrastructure, and

- restricted the AWS credentials' permissions by adjusting the corresponding Vault role.

Remember to clean up your environment by destroying all resources in both the Vault Admin and Terraform Operator workspaces.

Remember to stop your local Vault instance used in this tutorial by hitting Ctrl + C in the terminal window running Vault.

Now that you have injected secrets into Terraform using the Vault provider, you may like to:

Watch a video exploring Best Practices for using Terraform with Vault.

Learn how to codify management of Vault Community Edition and Vault Enterprise.

Learn more about the various Vault secrets engines.

You can take your security to the next level by leveraging Terraform Enterprise's Secure Storage of Variables to safely store sensitive variables like the Vault token used for authentication.

Learn more about the Terraform Vault Provider.