In Consul 1.9, we are pleased to announce Custom Resource Definition (CRD) support for configuring Consul on Kubernetes via Kubernetes-native manifests. This gives practitioners a more Kubernetes-native workflow, making it easier to configure L7 routing, intentions, service configuration, and proxy defaults using just Kubernetes YAML.

»Overview

One of the main goals we have for Consul on Kubernetes is to provide a frictionless experience for managing a service mesh on Kubernetes. By building Custom Resource Definitions for Consul on Kubernetes, we can enable users to interact with Consul in a way that may be more similar to their existing workflows.

Custom Resource Definitions for Consul have mainly been modeled after Configuration Entries, which allow one to define configuration for various aspects of Consul at a cluster or namespace level. Although configuration can be applied by using the Consul CLI to read and apply an HCL or JSON config file, doing so meant that a Kubernetes user would need to switch often between the Consul CLI and the kubectl CLI tools to configure the service mesh after workloads were deployed.

To demonstrate how to use CRDs with Consul on Kubernetes, let us describe a scenario where a fictional mobile app company wants to add a new feature to its backend API that allows administrators to edit the billing statements that users receive. To roll out this feature, the development team wants to build a new version of the billing service in which only administrators can edit statements for users. We’ll take a look at how to set up both Layer 7 routing and intentions in Consul service mesh using CRDs, so that traffic between services is routed and enforced correctly.

»L7 Routing

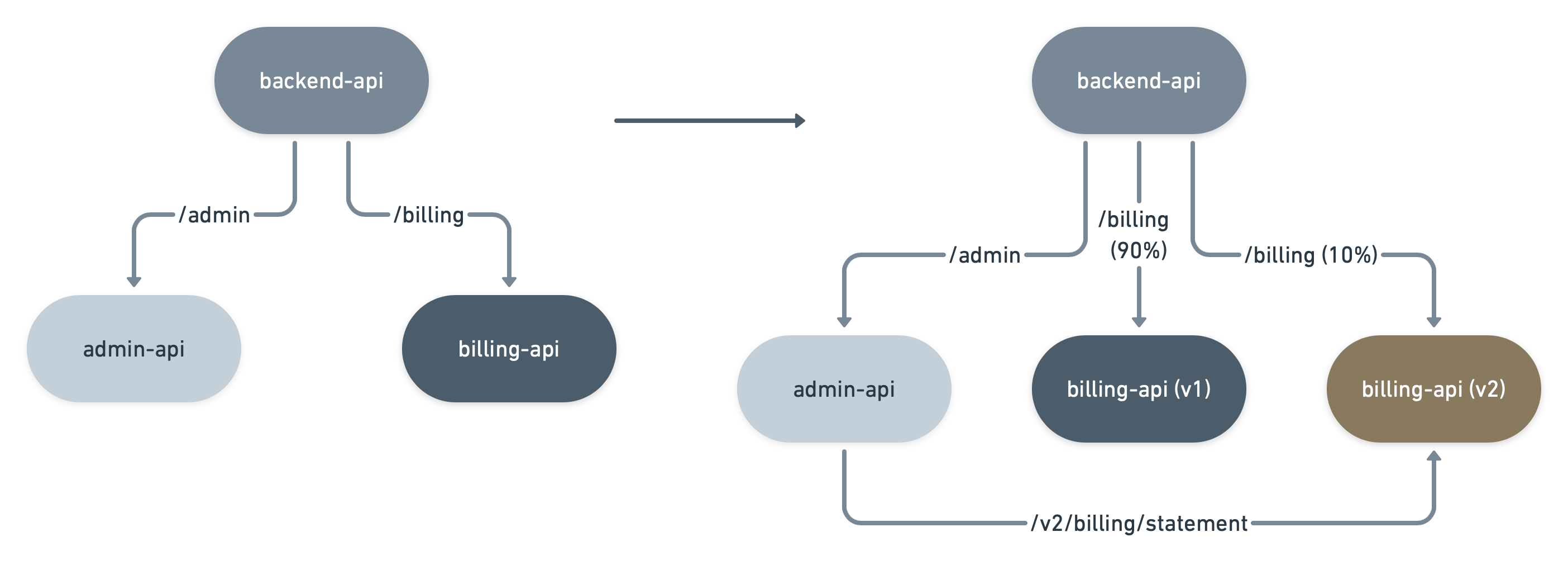

Let’s first take a look at how Consul service mesh is configured to route traffic from the “backend-api” service to the “admin-api” and “billing-api” services.
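Consul's L7 traffic management only applies to services whose protocol is HTTP-based, so the services in this scenario need their protocol declared before any routing rules take effect. A minimal sketch using the ServiceDefaults custom resource, assuming backend-api speaks plain HTTP (the protocol value is an assumption, not something stated in this post); the same resource would be repeated for admin-api and billing-api:

```yaml
# Sketch only: declares backend-api as an HTTP service so that
# ServiceRouter, ServiceSplitter, and L7 intentions can apply to it.
# Assumes the service speaks plain HTTP.
apiVersion: consul.hashicorp.com/v1alpha1
kind: ServiceDefaults
metadata:
  name: backend-api
spec:
  protocol: http
```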

Below is a simple example of how to leverage L7 routing using the ServiceRouter custom resource. For this example, we are configuring the service mesh to 1) route requests intended for the backend-api service with the HTTP path prefix /admin to the admin-api service, and 2) route requests intended for the backend-api service with the HTTP path prefix /billing to the billing-api service.

ServiceRouter CRD for backend-api:

    apiVersion: consul.hashicorp.com/v1alpha1
    kind: ServiceRouter
    metadata:
      name: backend-api
    spec:
      routes:
        - match:
            http:
              pathPrefix: "/admin"
          destination:
            service: admin-api
        - match:
            http:
              pathPrefix: "/billing"
          destination:
            service: billing-api

Now the development team wants to deploy a new version of the billing-api service that allows administrators to edit statements. The team can deploy the Kubernetes pods under the same Consul service name and use a Consul annotation to set service metadata, so that a service subset can filter on instances whose metadata matches version = v2. Another option is to register the new version under a separate service name and route a percentage of the original traffic to the new service.
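As a rough illustration of the first option, the pod template for the new version could carry Consul annotations that keep the service name billing-api and attach version metadata. This is a sketch under assumptions: the Deployment name, labels, container image, and port are hypothetical placeholders, while the annotation keys are the ones Consul on Kubernetes provides for Connect injection, service naming, and service metadata.

```yaml
# Sketch only: deploys v2 of billing-api under the same Consul service name.
# The Deployment name, labels, image, and port below are hypothetical.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: billing-api-v2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: billing-api
      version: v2
  template:
    metadata:
      labels:
        app: billing-api
        version: v2
      annotations:
        # Inject the Connect sidecar proxy into this pod.
        consul.hashicorp.com/connect-inject: "true"
        # Register under the existing Consul service name.
        consul.hashicorp.com/connect-service: "billing-api"
        # Sets Service.Meta.version = v2, which a subset filter can match.
        consul.hashicorp.com/service-meta-version: "v2"
    spec:
      containers:
        - name: billing-api
          image: example.com/billing-api:v2  # hypothetical image
          ports:
            - containerPort: 8080  # hypothetical port
```

The existing v1 pods would need the matching annotation with the value "v1" for a version-based subset filter to select them.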

Below we set up service subsets that filter between two different versions of a service based on the version metadata tag.

ServiceResolver CRD for billing-api:

    apiVersion: consul.hashicorp.com/v1alpha1
    kind: ServiceResolver
    metadata:
      name: billing-api
    spec:
      defaultSubset: v1
      subsets:
        v1:
          filter: "Service.Meta.version == v1"
          onlyPassing: true
        v2:
          filter: "Service.Meta.version == v2"
          onlyPassing: true

Next, we can split traffic between the two versions of billing-api by using the ServiceSplitter CRD, routing only 10 percent of the original traffic to the new version of the billing-api service.

ServiceSplitter CRD for billing-api:

    apiVersion: consul.hashicorp.com/v1alpha1
    kind: ServiceSplitter
    metadata:
      name: billing-api
    spec:
      splits:
        - weight: 90
          service: billing-api
          serviceSubset: v1
        - weight: 10
          service: billing-api
          serviceSubset: v2
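The other option mentioned earlier, registering the new version under a separate service name, can be expressed with the same resource and no subsets. A minimal sketch, assuming the new version is registered in Consul as billing-api-v2 (a hypothetical name chosen for illustration):

```yaml
# Sketch only: splits traffic across two distinct Consul services
# instead of two subsets of one service.
apiVersion: consul.hashicorp.com/v1alpha1
kind: ServiceSplitter
metadata:
  name: billing-api
spec:
  splits:
    - weight: 90
      service: billing-api
    - weight: 10
      service: billing-api-v2  # hypothetical service name for the new version
```

With this variant no ServiceResolver subsets are needed, at the cost of managing a second service name and its intentions.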

»Intentions

Finally, now that all routing has been set up correctly, we want to ensure that only certain services can establish connections with each other, based on service identities and Layer 7 request attributes. Starting with Consul 1.9, you will also be able to manage intentions using a ServiceIntentions CRD. Note: In order to use the ServiceIntentions CRD, which is currently in Beta, please use a Docker image for Consul 1.9 beta and above.

In this example, we assume that the default ACL policy for Consul is deny all. We then declare that only certain admin-api to billing-api traffic is allowed, based on the HTTP path prefix and HTTP method of each request. The other service-to-service intentions are left to the reader as an exercise.

    apiVersion: consul.hashicorp.com/v1alpha1
    kind: ServiceIntentions
    metadata:
      name: admin-api-intention
    spec:
      destination:
        name: billing-api
      sources:
        - name: admin-api
          permissions:
            - action: allow
              http:
                pathPrefix: /v2/billing/statement
                methods:
                  - GET
                  - PUT
          description: allow access from admin-api with prefix /v2/billing/statement for GET and PUT
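The example above relies on everything else being denied by default. Where the ACL default policy cannot be relied on for that, a similar baseline can be written as a wildcard intention. This is a sketch under assumptions: the resource name deny-all is a hypothetical choice, and the wildcard applies to every service in the mesh, so it should be reviewed before use in a shared cluster.

```yaml
# Sketch only: denies all service-to-service traffic that is not
# explicitly allowed by a more specific intention.
apiVersion: consul.hashicorp.com/v1alpha1
kind: ServiceIntentions
metadata:
  name: deny-all  # hypothetical resource name
spec:
  destination:
    name: "*"
  sources:
    - name: "*"
      action: deny
```

More specific intentions, such as the admin-api to billing-api rule, take precedence over the wildcard.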

»Manage Custom Resource Definitions

After deploying the initial custom resources using kubectl apply, you can continue to manage them using the same kubectl workflow. Below are examples of kubectl commands that let you quickly perform CRUD operations on the custom resources built for Consul. More details on other operations can be found in the Usage section of the CRD docs.

List a Consul CRD:

    $ kubectl get serviceresolver billing-api
    NAME          SYNCED
    billing-api   True

Describe a Consul CRD:

    $ kubectl describe serviceresolver billing-api
    Status:
      Conditions:
        Last Transition Time:  2020-10-09T21:15:50Z
        Status:                True
        Type:                  Synced

»Conclusion

With the release of Custom Resource Definitions for Consul, Consul on Kubernetes now provides a more native Kubernetes user experience. We anticipate making further Custom Resource Definitions available in the future, and as more configuration entries are added to Consul, we plan to provide a corresponding CRD for each. We hope you enjoy using a more Kubernetes-native approach to Consul. Please see the following Learn Guide tutorial and docs to get started.